Math3ma

What is a Good Quantum Encoding? Part 1

Over the past couple of years, I've been learning a little about the world of quantum machine learning (QML) and the sorts of things people are thinking about there. I recently gave a high-level talk on some of these ideas in connection with a December 2024 preprint called "Towards Structure-Preserving Quantum Encodings", coauthored with collaborators at Deloitte (Andrew Vlasic and Anh Pham) and MIT (Arthur Parzygnat). I spoke on this at the AWM Research Symposium this past May and have decided to write it up in a series of blog posts, as well.

In short, our preprint translates one aspect of an open problem in QML into the language of category theory, in hopes that by casting the problem in a more mathematically-formal light, we might be led to new tools and techniques that could help. I'll explain that in this series of articles.

Of course, the real question is: Did this help the QML problem? Right now, it's too early to know. Our preprint was written for a QML audience, and we assumed no familiarity with category theory. Still, it takes a while for ideas to spread. So, one goal for writing this series is just to get these ideas "out there" and see if they're of interest to anybody.

Another goal is just to share with everyone some topics that I personally think are interesting.

So here we go!

Magnitude, Enriched Categories, and LLMs

It's hard for me to believe, but Math3ma is TEN YEARS old today. My first entry was published on February 1, 2015 and is entitled "A Math Blog? Say What?" As evident from that post, I was very unsure about creating this website. At the time, writing about mathematics seemed very niche — though, I guess it still is — and the thought of sharing my musings publicly was quite intimidating. But as I mentioned in that first article, I started Math3ma simply as a study tool for learning graduate-level mathematics. (Hence the name: "mathema = lesson" in Greek.) And yet I had lots of doubts about whether my articles would resonate with anyone. So the tone in that first article was pretty apologetic. Needless to say, I've been amazed by all that's happened in the past ten years because of Math3ma. Thanks to all of you who've visited the site over the years!

What is Superposition, Really?

The next episode in the fAQ video podcast is now up! As mentioned last time, this is a new project I've embarked on with Adam Green where we chat about different ideas in quantum physics and (at some point) AI. Our primary goal is simply to help make these ideas more accessible to wide audiences — especially to folks who may've heard about certain words in, say, the popular media, but who may not have a technical background and who aren't really sure what those words mean.

We thought it'd be good to launch the podcast with some basic, fundamental ideas that can serve as a foundation for discussing real-world applications in future episodes. Last time we introduced the topic of qubits, and today we're focusing on another basic topic, namely superposition.

So, what is superposition? We spend nearly an hour on this question, so I won't spoil it all for you! But there are a few remarks I can't resist sharing here.

New Video Podcast: fAQ

In a bit of fun news, I've just launched a new video podcast with my coworker Adam Green. This new video series, which we're calling fAQ, consists of casual conversations between me and Adam on basic ideas in quantum physics and eventually some topics in AI. (Hence the "A" and "Q," which is also a hat tip to our employer, SandboxAQ.) The target audience is very broad and includes any curious human who wants to learn more about these ideas. Our hope is that these informal chats might help demystify some ideas in math, physics, and their applications and make the concepts more accessible to wide audiences.

Adam is a biologist by training, an excellent science communicator, and before joining Sandbox he was the Director of US Academic Content at Khan Academy. He's now the Head of Science Education at Sandbox, and since neither of us is a physicist, we're essentially working together to learn new things and are inviting anyone to join us!

Our plan is to spend the first few episodes discussing fundamental ideas, just to lay down some groundwork, and then we'll see where things go from there. So, without further ado, here's a short seven-minute "trailer" video we made to introduce the podcast.

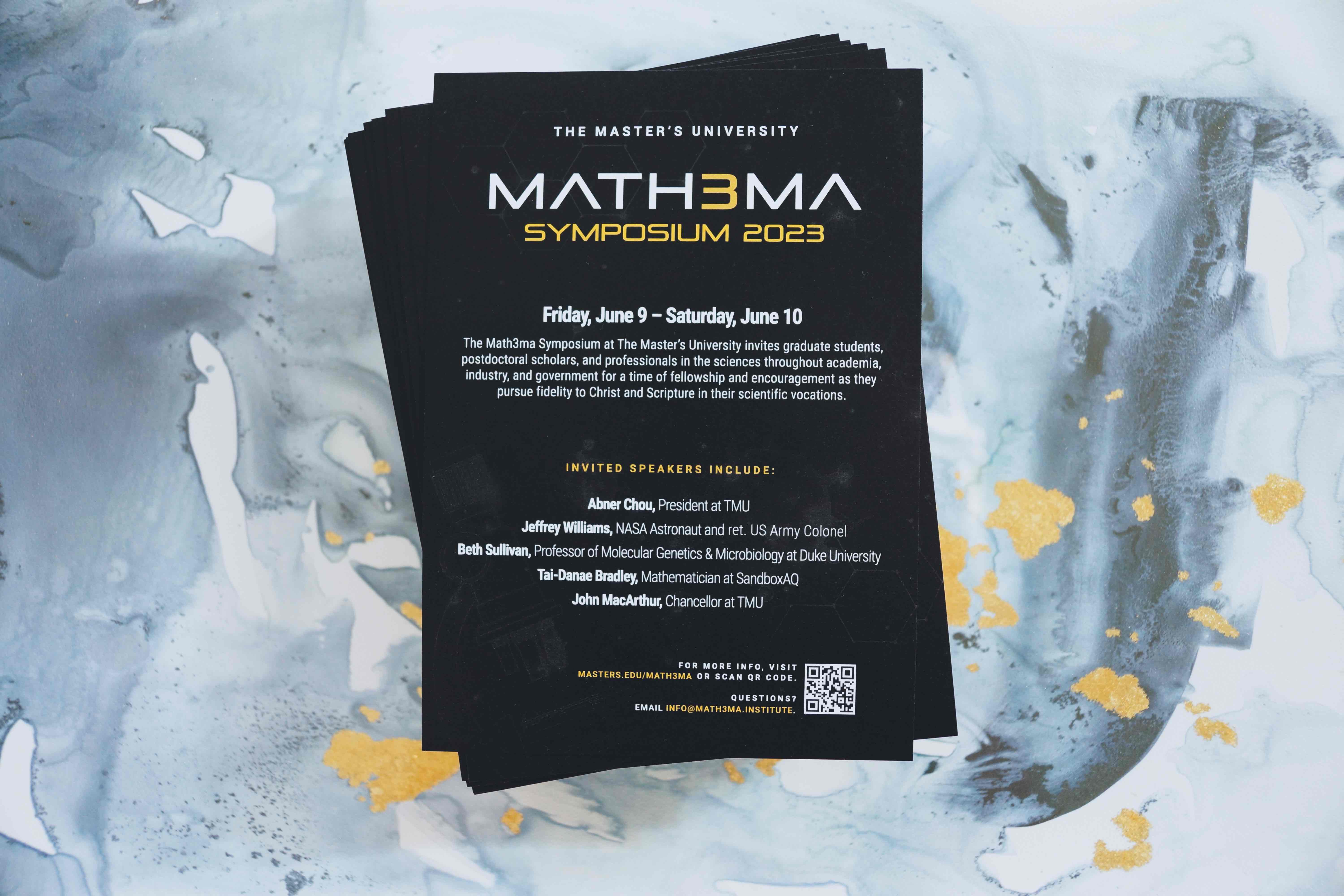

Symposium at The Master's University

Recently on The Math3ma Institute's blog, I announced an upcoming event that will be hosted at The Master's University (TMU), which is a small private university in Santa Clarita, California. I wanted to briefly mention it here, too, in case it might be of interest to any readers.

This summer on June 9–10, I'll be joined by NASA astronaut Jeffrey Williams and molecular geneticist Beth Sullivan (Duke University) for a two-day symposium that invites folks with vocations in a wide range of scientific disciplines, from academia, industry, and government, to gather for fellowship, encouragement, and dialogue and discussion. We're also honored to be joined by theologian Abner Chou, the president of TMU, as well as John MacArthur, the chancellor of TMU and the pastor of Grace Community Church in Los Angeles.

If you're interested in learning more, details and registration are now available at: www.masters.edu/math3ma.